by Maxim Knepfle, CTO Tygron

Not so long ago I wrote a blog post about the one billion grid cells that we can compute. Today, after some major upgrades to the Tygron Platform, we can calculate up to 10 billion cells, and with the new Ampere GPU generation 64 billion is around the corner.

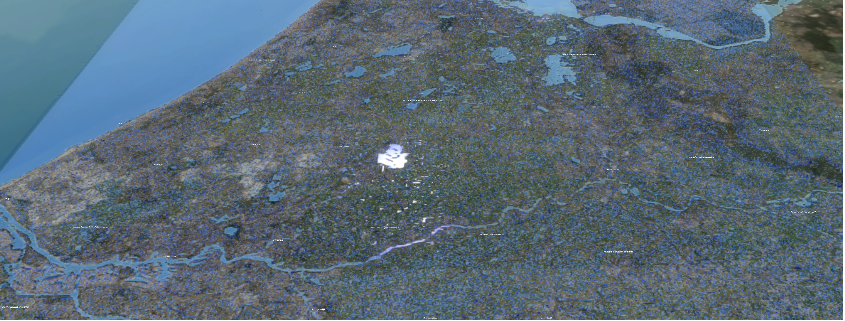

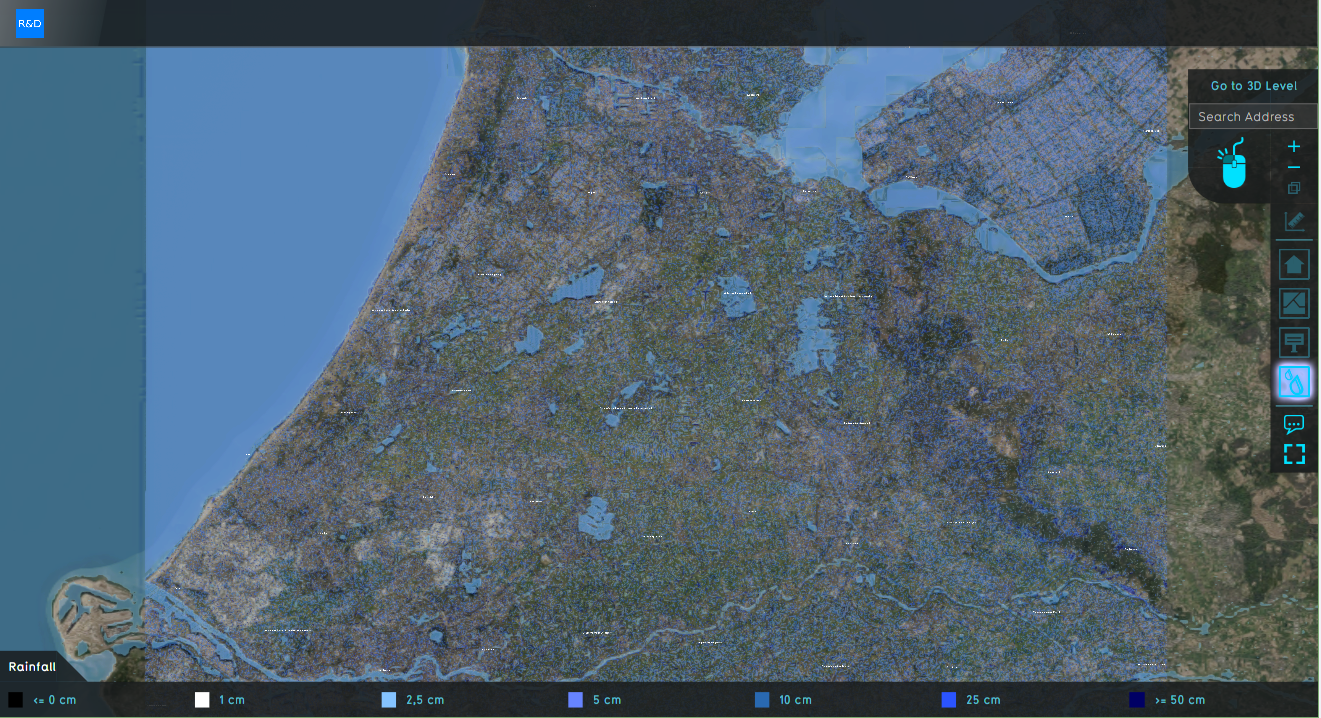

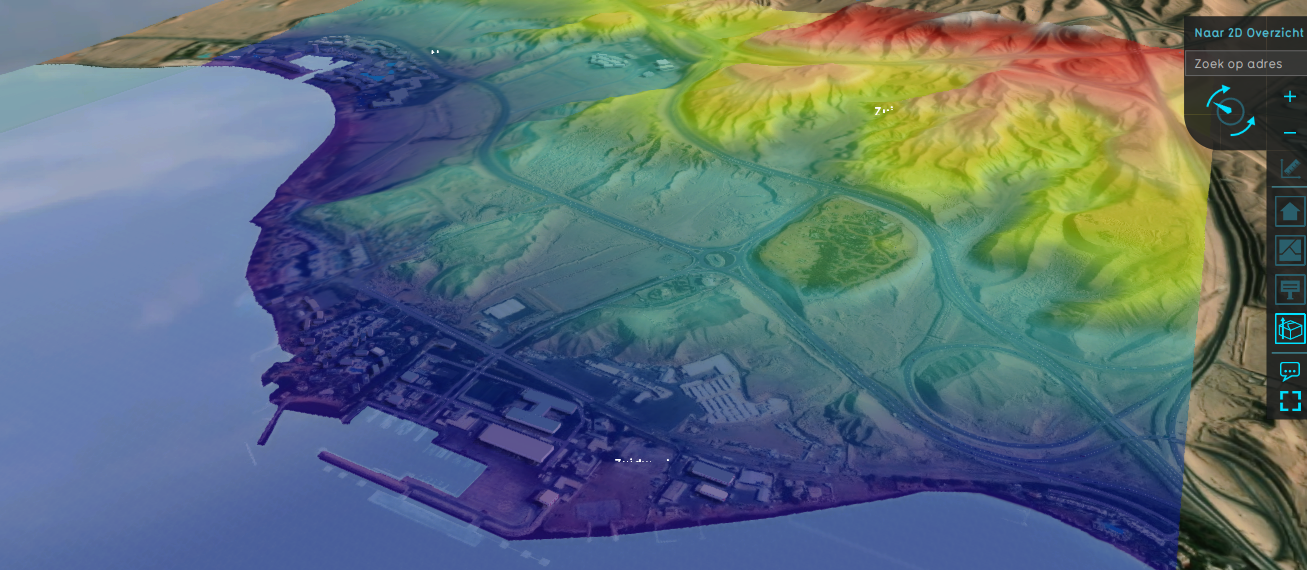

Shown here is the first successful run, in which we calculated a heavy rain shower (60mm) over the entire western part of the Netherlands, an area of 100x100km at 1x1m resolution. Using rain showers (instead of a flood) forces all cells to be active and triggers water flow according to the Saint-Venant equations in every cell.
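To put that area and resolution into numbers, here is a small back-of-the-envelope sketch in Python. The function name `cell_count` is only illustrative and not part of the Tygron Platform.

```python
def cell_count(width_km, height_km, cell_size_m=1.0):
    """Number of grid cells needed to cover a rectangular area."""
    cols = round(width_km * 1000 / cell_size_m)
    rows = round(height_km * 1000 / cell_size_m)
    return cols * rows


cells = cell_count(100, 100)           # 100x100km at 1x1m
print(f"{cells:,} cells")              # the grid size of the run described above
print("fits in 32 bits:", cells <= 2**32)
```

So the run covers 10 billion cells, which is more than a single 32-bit index (2^32, roughly 4.3 billion values) can number.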

Calculating this many cells is one thing, but handling the required amount of data efficiently poses new challenges, some of which I describe in this blog entry:

- Addressing: This used to be limited to 2^32 ≈ 4.3 billion cells with 32-bit addresses. The easy way out was to use longer (64-bit) addresses, but this would slow down performance and is of no use for most smaller maps. Instead, by rewriting our underlying hybrid-memory addressing code, we now have multiple 32-bit address sets, for example one per device. With an eight-device setup we can therefore handle more than 32 billion cells in total.

- Block compression: Large grids would still slow down the cloud servers with terabytes of data in RAM. To remedy this we added a new block compression system that compresses grid data in two dimensions. We created our own compression algorithm instead of relying on e.g. JPEG for two reasons: first, it must not lose accuracy (lossless compression), and second, access times must stay short to allow on-the-fly compression and decompression. Using this block compression we now achieve between 30% and sometimes even 90% memory compression compared to the original data.

- 25cm: Having so many cells also allows us to experiment with smaller grid cells, e.g. 25cm. Most elevation maps have a 50cm resolution by default, but new sensor techniques allow for more precise river bed elevation maps. This means we can now investigate whether this higher elevation-map resolution also improves outcomes (or only slows down the calculation).

- Ampere generation: With the new addressing architecture we are no longer limited by the software, only by the GPU hardware. We are already testing single Ampere generation GPUs. We expect that a multi-GPU system could then safely calculate between 50 and 64 billion grid cells. This means that very soon we can finally calculate the entire Netherlands (300x200km) at a 1x1m resolution. For example, a heavy rain shower stress test for all Dutch cities would take just one project to set up and calculate. Or combinations of heavy rain and river floods can be calculated fully integrated in a single run.
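Two of the ideas in the list above, multiple 32-bit address sets and lossless two-dimensional block compression, can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the Tygron implementation: the linear split of cells over address sets, the tiny block size, the use of the general-purpose `zlib` compressor in place of our own algorithm, and all function names are chosen for the example only.

```python
import struct
import zlib

ADDRESS_SET_SIZE = 2**32  # cells reachable through one 32-bit address set
BLOCK = 4                 # block edge in cells (tiny, for illustration only)


def split_address(global_index):
    """Map a global cell index to (address set, 32-bit local offset)."""
    if global_index < 0:
        raise ValueError("cell index must be non-negative")
    return divmod(global_index, ADDRESS_SET_SIZE)


def join_address(address_set, offset):
    """Inverse of split_address."""
    if not 0 <= offset < ADDRESS_SET_SIZE:
        raise ValueError("offset does not fit in 32 bits")
    return address_set * ADDRESS_SET_SIZE + offset


def compress_grid(grid):
    """Losslessly compress a 2D grid of floats, one square block at a time.

    Returns a dict mapping (block_row, block_col) to compressed bytes.
    zlib stands in here for a purpose-built compression algorithm.
    """
    rows, cols = len(grid), len(grid[0])
    blocks = {}
    for r0 in range(0, rows, BLOCK):
        for c0 in range(0, cols, BLOCK):
            # Flatten the block row by row; edge blocks may be smaller.
            values = [v for row in grid[r0:r0 + BLOCK] for v in row[c0:c0 + BLOCK]]
            raw = struct.pack(f"<{len(values)}d", *values)  # 64-bit floats, no rounding
            blocks[(r0 // BLOCK, c0 // BLOCK)] = zlib.compress(raw)
    return blocks


def read_cell(blocks, cols, row, col):
    """Decompress only the block that holds (row, col) and return its value."""
    block_row, local_row = divmod(row, BLOCK)
    block_col, local_col = divmod(col, BLOCK)
    width = min(BLOCK, cols - block_col * BLOCK)  # edge blocks may be narrower
    raw = zlib.decompress(blocks[(block_row, block_col)])
    (value,) = struct.unpack_from("<d", raw, 8 * (local_row * width + local_col))
    return value


# Example: the last cell of a 10-billion-cell grid, and a small 6x10 test grid.
print(split_address(10_000_000_000 - 1))
grid = [[float(r * 10 + c) for c in range(10)] for r in range(6)]
blocks = compress_grid(grid)
print(read_cell(blocks, 10, 5, 9) == grid[5][9])
```

The point of the block layout is that reading one cell only touches the block it lives in, so access stays fast without decompressing the whole grid, and packing the values at full precision keeps the round trip lossless.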

Today more and more data becomes available (e.g. satellite imagery), providing detailed information for every square meter. Combining this with the rapid development of GPU hardware and parallel calculation algorithms makes things possible that we never thought of before. With that in mind I hope that in one of my next blogs I can show the entire Netherlands at 1x1m resolution in one single integrated model!

Although this blog is written with a focus on the Netherlands, this technology and setup can be used in all parts of the world where the spatial data is available. It is designed to be plug and play and offers a great variety of calculation models, such as heat stress, energy networks, subsidence, biodiversity, traffic noise and many more.