In a previous blog entry we showed that it was possible to calculate a 60x60km map at very high resolution (1m). This is especially useful for small canals and surface rainfall simulations.

However, when calculating the flow in large rivers (100 m+ wide), a resolution of 5 m cells should be more than enough. Except that rivers are usually longer than 60 km!

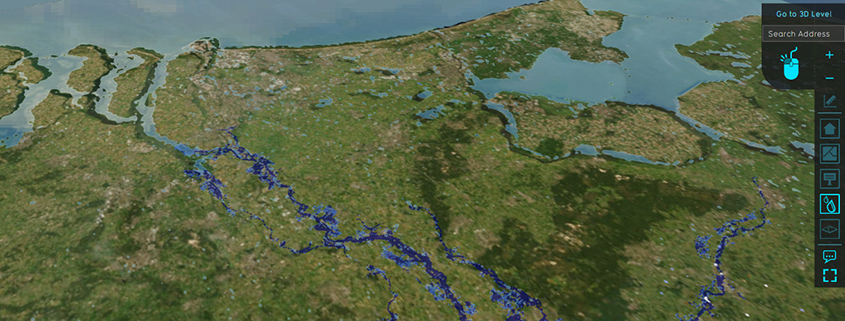

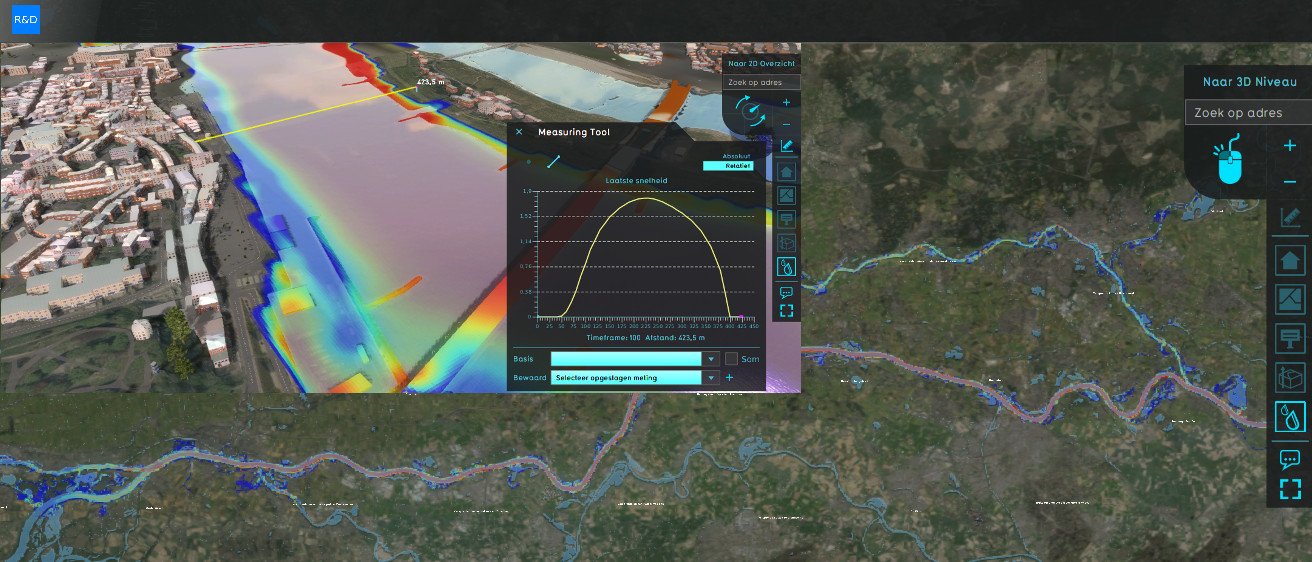

This resulted in an experiment to create a map the size of the Netherlands with all major rivers (Rhine, Meuse and IJssel) interacting with each other in a single simulation model. The model contains 2,000,000,000 (2 billion) grid cells and hundreds of kilometers of river. Calculating river deltas at such high detail seemed impossible only a few years ago.

To solve this we have incorporated several new features into the Tygron Platform.

- Level of Detail: The Tygron Platform can now load parts of the rectangular project map in higher detail than the rest. When simulating river flow you only need the river polygon and a margin of X kilometers surrounding it for overflow. This part is loaded at the usual detail level, while an area e.g. 30 km away from the river can be loaded in lower detail: a lower-resolution height map and satellite images, fewer buildings, etc.

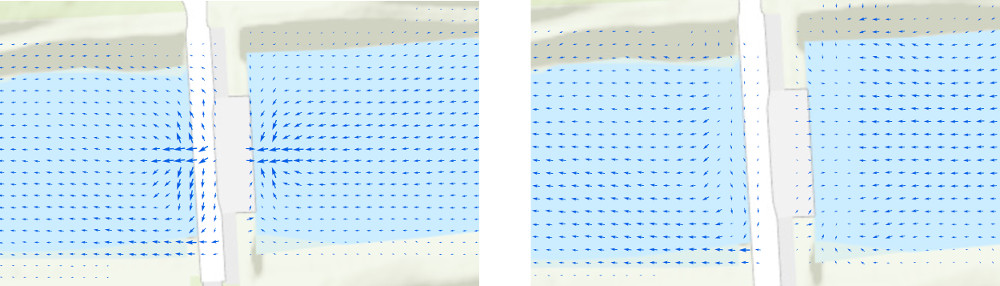

- Inactive Cells: The Tygron Platform uses structured square grid cells for simple and fast GPU processing. This also means that in a river simulation a large part of the project map will not be active (no water in the cells). To prevent the GPU from loading the data for these cells from memory, specific CUDA blocks can be deactivated. Deactivating them carries a very small performance penalty, but the overall performance gain is much larger.

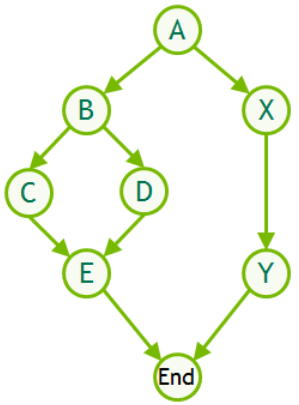
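
To illustrate the idea of inactive cells, here is a minimal Python sketch (not the actual Tygron CUDA code). It groups a small depth grid into square blocks and marks a block as active only when it, or a neighbouring block, contains water. The function names, the block size and the one-block halo around wet blocks are assumptions made for this example; the halo is there so water can still flow into a dry block next to a wet one.

```python
def wet_blocks(depth, block):
    """Flag each block of `block` x `block` cells that contains any water."""
    rows, cols = len(depth), len(depth[0])
    n_br = -(-rows // block)  # ceiling division
    n_bc = -(-cols // block)
    wet = [[False] * n_bc for _ in range(n_br)]
    for r in range(rows):
        for c in range(cols):
            if depth[r][c] > 0.0:
                wet[r // block][c // block] = True
    return wet


def with_halo(wet):
    """Also activate the 8 neighbours of every wet block (assumed halo)."""
    n_br, n_bc = len(wet), len(wet[0])
    active = [[False] * n_bc for _ in range(n_br)]
    for br in range(n_br):
        for bc in range(n_bc):
            if not wet[br][bc]:
                continue
            for dr in (-1, 0, 1):
                for dc in (-1, 0, 1):
                    r, c = br + dr, bc + dc
                    if 0 <= r < n_br and 0 <= c < n_bc:
                        active[r][c] = True
    return active


# 8 x 8 cells, water only in the top-left cell, blocks of 2 x 2 cells.
depth = [[0.0] * 8 for _ in range(8)]
depth[0][0] = 1.5
active = with_halo(wet_blocks(depth, block=2))
n_active = sum(flag for row in active for flag in row)
print(f"{n_active} of {len(active) * len(active[0])} blocks active")
```

In this toy grid only 4 of the 16 blocks need to be processed in the next time step; on a map where the rivers cover a small fraction of the area, the skipped share is what makes the difference.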

- Graphs: Large simulations contain not only a large number of grid cells but, due to the explicit nature of our parallel calculation model, also many time steps to comply with the CFL condition. A typical simulation can have millions of time steps, each with 80+ kernel launches over multiple GPUs. However, each kernel launch incurs a small startup overhead. To make this more efficient we now use CUDA Graphs, which were recently introduced by NVIDIA. Graphs are designed for workloads that repeat the same sequence of kernels, such as time-step based simulations, and take away most of this overhead.

- Streaming launches: To make the graphs even more efficient we launch batches of 10,000 graphs into a CUDA stream. When the simulation reaches the endpoint we stop launching new graphs. When the endpoint falls partway through a batch of 10,000, the kernels are notified and simply do nothing. The overhead of some unused kernel launches is far less than that of waiting for each single graph to execute.

- River Weirs & Inlets: To simulate the flow of water into the river and exiting into the sea we have introduced a new area-based inlet/outlet. When e.g. 3000 m³/s of water flows into the river, this amount can be spread over a larger area, creating a stable input flow instead of dumping it all on a single cell. The same principle can now also be used for large river weirs. Depending on the weir width, multiple cells are used to take in water on one side and release it over multiple cells on the other side.
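
To give a feeling for why the area-based inlet matters, the small Python sketch below computes how much the water level rises per time step when a discharge is divided evenly over the inlet cells. The function name, the even split, the time step of 0.1 s and the 120-cell inlet area are assumptions for this example only, not values from the platform.

```python
def inflow_depth_per_step(discharge_m3s, n_inlet_cells, cell_size_m, dt_s):
    """Water depth (m) added to each inlet cell during one time step.

    Assumes the discharge is split evenly over all inlet cells.
    """
    if n_inlet_cells <= 0:
        raise ValueError("an inlet needs at least one cell")
    volume_m3 = discharge_m3s * dt_s            # water entering this step
    volume_per_cell = volume_m3 / n_inlet_cells
    return volume_per_cell / (cell_size_m ** 2)  # volume / cell area


# 3000 m3/s on 5 m cells with an assumed time step of 0.1 s.
single = inflow_depth_per_step(3000.0, 1, 5.0, 0.1)
spread = inflow_depth_per_step(3000.0, 120, 5.0, 0.1)
print(f"single cell: {single:.2f} m per step")
print(f"120 cells:   {spread:.2f} m per step")
```

Under these assumptions a single cell would have to absorb about 12 m of water every step, while spreading the same discharge over 120 cells adds about 0.1 m per cell, which is a much gentler input for an explicit solver.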

The major river experiment worked, but it also revealed many new challenges. Water flows through the rivers, but we did not yet include all the detail about the river weirs across the Netherlands. In addition, the number of zoom levels in the Tygron client software was inadequate, and the memory requirements for the client application (not the server cloud) are very high.

However, I think that by pushing the limits of the system we will find these bottlenecks faster and can solve them all over time. Also, improving performance on larger maps often results in better performance for smaller maps and low-end client hardware.

Next: the North Sea?